📬 Remix Reality Insider: The Case for Human-Centered Medical AI

Your weekly briefing on the systems, machines, and forces reshaping reality.

🛰️ The Signal

This week’s defining shift.

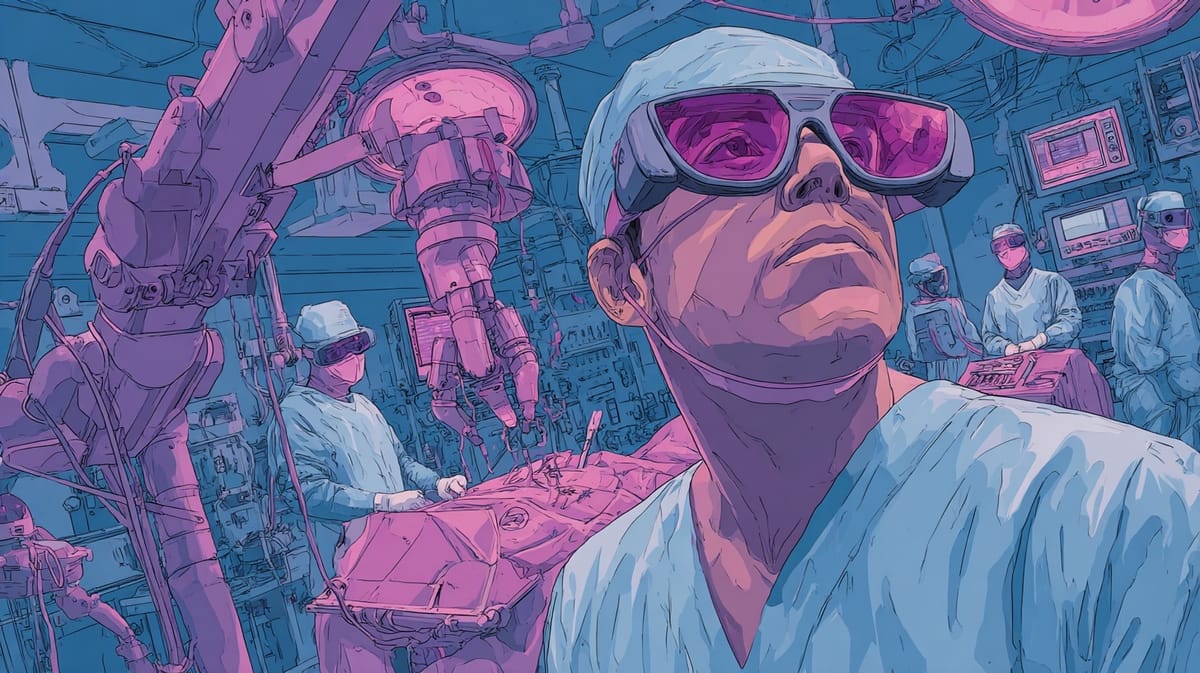

Human-centered systems are becoming the default design choice in medical AI and XR. Instead of trying to remove clinicians from the loop, these platforms are being built around how people actually work. The technology is designed to support human decisions and actions, not automate them away.

This week’s news surfaced signals like these:

- ORamaVR is raising capital and scaling its platform to improve human skills and reduce errors in surgery and clinical procedures through immersive, AI-driven training.

- Medtronic’s Hugo has now been used in its first U.S. commercial surgery, showing how the system is moving into real hospital workflows to extend what surgeons can do in the operating room.

- Augmedics’ xvision, through its exclusive deal with VB Spine, is being pushed into wider spine surgery use by putting guidance directly into the surgeon’s field of view during procedures.

Why this matters: In high-risk fields like medicine, progress is coming from systems that raise the floor of human performance. The winning stacks are not the ones that promise autonomy. They are the ones that treat people as a core part of the architecture and design around trust, training, and day-to-day use.

🧠 Reality Decoded

Your premium deep dive.

World models have become a big focus in physical AI for a practical reason. If a system is going to operate in the real world, it needs a safe place to learn and get tested before it ever does. Rather than relying only on what has already been recorded, teams are now building simulations that can create new situations on demand.

Waymo’s new World Model is the latest and clearest example of this shift. Built to generate interactive driving environments with camera and lidar outputs across controllable conditions like traffic, weather, and road layout, it turns simulation from playback into a core part of how autonomous systems are trained, tested, and trusted.

Three takeaways from Waymo’s world model:

- Coverage matters more than realism alone: Real footage is limited by what actually happens. World models expand the training and testing space to include rare, extreme, and safety-critical situations that would otherwise take years to encounter.

- Physical AI needs a place to fail safely: You cannot discover the limits of a driving system on public roads. World models create a sandbox where mistakes are cheap and learning is fast.

- The world can be tested as the car sees it: Because Waymo’s model generates both camera and lidar data, engineers can evaluate how the Driver behaves when its full sensing stack experiences the same situation, not just one sensor at a time.

Key Takeaway:

World models are becoming the backbone of physical AI. They let systems imagine more of the world than they have seen, so they can try things, stress themselves, and run into problems in simulation before they meet them in the real world.

📡 Weekly Radar

Your weekly scan across the spatial computing stack.

📦 Kawasaki Introduces Compact 110kg Palletizing Robot with Hollow Wrist Design

- The CP110L is a compact palletizing robot with a hollow wrist design aimed at space-constrained installation environments.

- Why this matters: By routing cables internally, Kawasaki is targeting faster installation, easier maintenance, and reduced interference. These details matter in space-constrained palletizing environments.

🤖 Toyota Expands Use of Humanoid Robot Digit After Successful Pilot

- Toyota Motor Manufacturing Canada has signed a commercial agreement with Agility Robotics to deploy the humanoid robot Digit following a successful pilot.

- Why this matters: Moving from pilot to production is a big milestone. When a manufacturer moves a humanoid robot into standard operations, it reflects operational confidence, not experimentation.

🏪 VenHub Adds Self-Diagnosing Systems to Keep Autonomous Stores Running

- VenHub has introduced self-diagnostic capabilities across its robotic systems to reduce downtime and maintain continuous store operations.

- Why this matters: Self-diagnosing hardware shifts these stores from reactive maintenance to built-in resilience, which is what actually makes 24/7 automation viable at network scale.

🎮 Meta Splits Quest VR and Worlds as Horizon+ Tops 1 Million Subscribers

- Meta is separating its Quest VR platform from Worlds, shifting Worlds to focus almost exclusively on mobile while doubling down on third-party VR development.

- Why this matters: Meta is drawing a hard line between VR and mobile while signaling that subscriptions and in-app purchases are becoming central to Quest’s future.

📞 Vuzix Rolls Out Enterprise Smart Glasses Kits With Native Teams and Zoom

- Vuzix introduced Vuzix Solutions, a portfolio of integrated smartglasses kits that combine hardware and pre-configured software for enterprise deployment.

- Why this matters: By bundling glasses with native collaboration tools, Vuzix is lowering the friction that slows enterprise rollouts.

🏃 Omni One Joins Made for Meta Program, Targeting Millions of Quest Users

- Virtuix has joined the Made for Meta program and plans to make its Omni One treadmill compatible with Meta Quest headsets and games.

- Why this matters: Being part of the Made for Meta program gives Virtuix direct alignment with the largest installed base in XR, lowering friction for consumers who already own Quest headsets. Certified ecosystem status can also signal credibility, which matters when selling connected hardware into a fast-growing but still selective market.

⚙️ NORD Cuts Commissioning Timeline with Digital Twin and Virtual Simulation Tools

- NORD DRIVESYSTEMS now offers digital twin and virtual commissioning capabilities that cut the time between configuration and commissioning from several months to a few weeks.

- Why this matters: This is about collapsing the gap between engineering and operations. If the control logic works before hardware ships, the factory floor stops waiting on integration.

🐘 Waymo Builds a World Model to Simulate the “Impossible” on the Road

- Waymo unveiled the Waymo World Model, a generative simulation system built on Google DeepMind’s Genie 3 to create hyper-realistic 3D driving environments.

- Why this matters: Moving from replaying recorded drives to generating entirely new edge cases on demand gives Waymo a way to systematically stress-test rare and complex scenarios before they ever unfold on real roads.

🌀 Tom's Take

Unfiltered POV from the editor-in-chief.

This week, I have been thinking a lot about filters and lenses, especially as I’ve had a lot more Zoom calls lately with a heavy dose of the beauty feature on. Today’s AR filters are an early glimpse of what could become a killer feature of augmented reality glasses. They show that we can change how we experience reality in real time. Right now, AR mostly alters what happens on a screen. But with glasses you wear every day, that layer becomes constant, something you can apply anywhere and at any time.

Once you can do that, you can experience the world in an almost endless number of ways. Mundane things can suddenly become extraordinary. Dogs can become pink elephants. People can become aliens. Your street in modern-day San Francisco can turn into a page from its history books. And this does not have to stop with what you see. The same idea could apply to sound. I’ve always imagined how amazing it could be to walk through a city and hear the conversations around you as if they were a musical number. The sky really is the limit when the interface is your entire field of view.

But as magical as this sounds, a world where everyone can choose their own version of reality creates a real challenge. Today, we mostly agree on what we are looking at. We agree that this is a tree, that the leaves are green, and that there is a road beyond it, for example. That shared understanding is what lets us communicate, collaborate, and stay (mostly) on the same page. When everyone starts seeing a different version of the same place, that common reference point starts to slip.

So the real question becomes: what will the new ground truth be?

Remixing reality is a superpower of immersive technology. The work ahead is figuring out how to use that power without losing the shared world we still need in order to understand each other and move through it together.

🔮 What’s Next

3 signals pointing to what’s coming next.

- Enterprise AR as turnkey infrastructure

Vuzix’s new enterprise kits bundle its glasses with native Teams and Zoom instead of leaving companies to wire everything together. That cuts out a lot of the setup work that slows deployments and makes smart glasses look more like tools you can roll out.

- 24/7 automation needs a nervous system

VenHub’s robotic stores run without staff on site, and the company is adding self-diagnostics and predictive monitoring so parts can fail without shutting everything down. That kind of built-in awareness is what makes 24/7 autonomous retail workable at scale.

- Robots designed to work with people, not around them

Toyota is moving Agility Robotics’ Digit out of a pilot and into everyday factory, logistics, and supply chain work under a service agreement. This puts a general-purpose humanoid into real workplaces, alongside people, doing repetitive and physically demanding jobs instead of staying in isolated automation zones.

Know someone who should be following the signal? Send them to remixreality.com to sign up for our free weekly newsletter.

🛠️ This newsletter uses a human-led, AI-assisted workflow, with all final decisions made by editors.