AGIBOT Pushes World Models Into Interactive Simulation With Genie Envisioner 2.0

- AGIBOT updated Genie Envisioner to version 2.0, turning its world models into interactive simulators where robots can act and learn.

- The system uses a World Action Model to link actions and outcomes, enabling training and evaluation inside generated environments instead of relying only on real-world data.

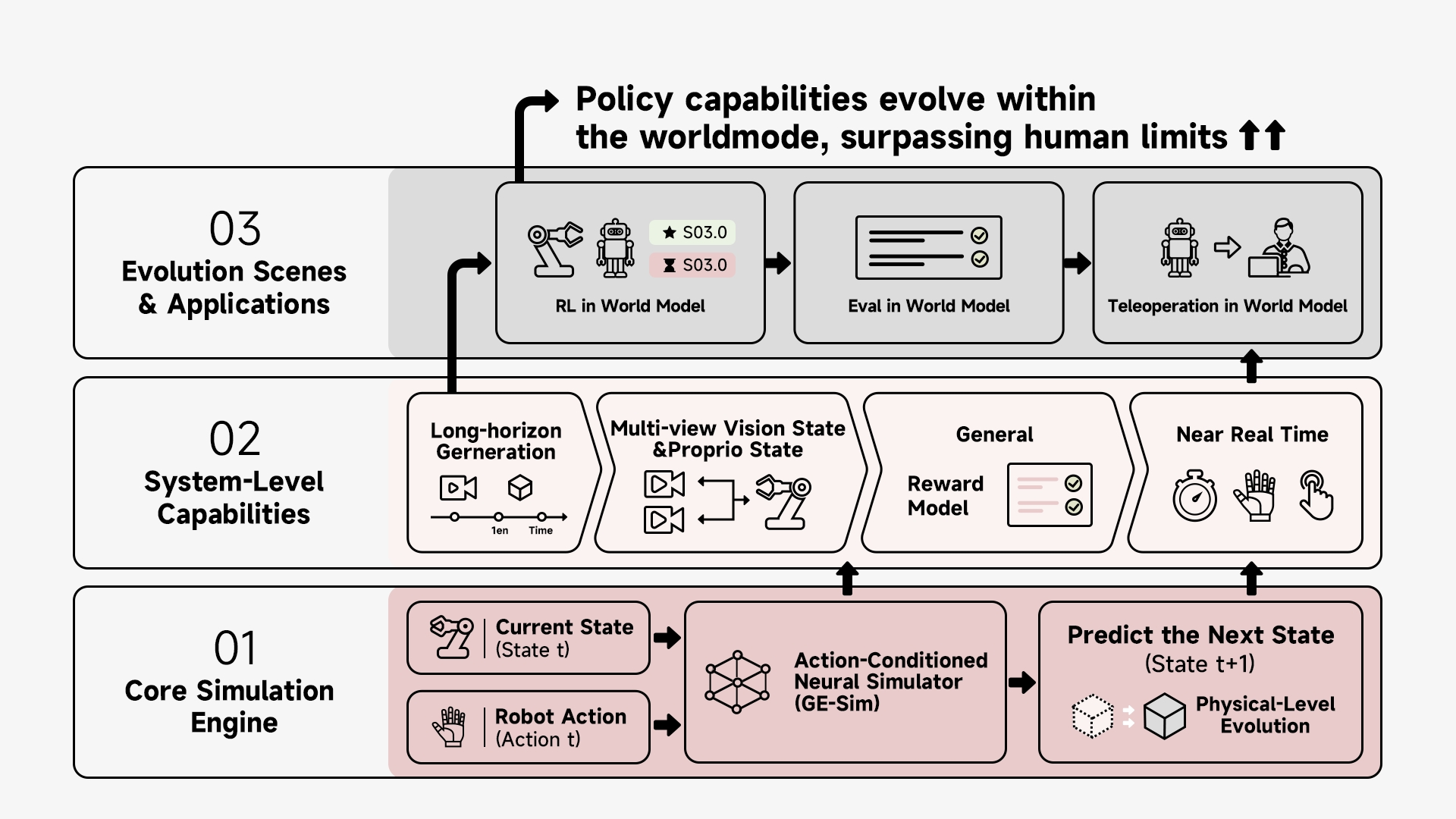

AGIBOT has debuted a major update to Genie Envisioner, its world model platform. The update moves the system from describing the world to running it as a simulation. It builds on the World Action Model, which links state, action, and outcome so that changes unfold step by step. Robots can therefore act inside model-generated environments that respond to their actions, which supports training and evaluation without relying only on real-world testing.

Genie Envisioner 2.0 brings these capabilities into an operational simulation system. The environment updates as robots take actions, remains stable over longer sequences, and keeps visual and spatial elements consistent. It includes a built-in reward model and supports evaluation, reinforcement learning, and operation within the system, along with tools for simulation, benchmarking, and working with real and generated data.
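To make the idea of an environment that "updates as robots take actions" concrete, here is a minimal toy sketch of a state, action, outcome loop scored by a reward function. It is not AGIBOT's code or API: `toy_world_model`, `toy_reward_model`, `greedy_policy`, and `rollout` are hypothetical names, and the hand-written 2D dynamics stand in for what would be a learned model in a real system.

```python
import math
from typing import Callable, List, Tuple

State = Tuple[float, float]   # toy stand-in for an observation: gripper (x, y)
Action = Tuple[float, float]  # toy stand-in for a command: (dx, dy)
Step = Tuple[State, Action, State, float]


def toy_world_model(state: State, action: Action) -> State:
    """Hypothetical dynamics: predict the next state from state and action.

    A learned world model would replace this hand-written rule.
    """
    return (state[0] + action[0], state[1] + action[1])


def toy_reward_model(state: State, goal: State) -> float:
    """Hypothetical reward: negative distance to the goal (0.0 is best)."""
    return -math.hypot(goal[0] - state[0], goal[1] - state[1])


def greedy_policy(state: State, goal: State, max_step: float = 0.5) -> Action:
    """Move toward the goal, limited to max_step along each axis."""
    def clip(delta: float) -> float:
        return max(-max_step, min(max_step, delta))
    return (clip(goal[0] - state[0]), clip(goal[1] - state[1]))


def rollout(
    start: State,
    goal: State,
    policy: Callable[[State, State], Action],
    max_steps: int = 20,
) -> List[Step]:
    """Run the policy inside the simulated environment, one step at a time."""
    state = start
    trajectory: List[Step] = []
    for _ in range(max_steps):
        action = policy(state, goal)                 # robot chooses an action
        next_state = toy_world_model(state, action)  # environment responds
        reward = toy_reward_model(next_state, goal)  # outcome is scored
        trajectory.append((state, action, next_state, reward))
        state = next_state
        if reward > -1e-6:  # close enough to the goal: stop early
            break
    return trajectory


if __name__ == "__main__":
    for step in rollout((0.0, 0.0), (2.0, 1.0), greedy_policy):
        print(step)
```

The recorded trajectory of (state, action, next state, reward) tuples is the kind of data a reinforcement learning method or an evaluation benchmark could consume without any real-world trial, which is the role the article describes for the simulator.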

The update also adds tools that predict how the environment will change after a robot takes an action, measure whether those actions are correct, and expand training data by editing and generating new scenarios from real-world inputs. This makes the system usable as a place where robots can be tested and trained without leaving the simulation.

🌀 Tom’s Take:

This update turns world models into places where robots can practice, not just predict what might happen. Progress then depends on how accurate and responsive the simulated environment is, rather than on running more real-world trials.

Source: AGIBOT